How zero-knowledge proofs can make AI fairer

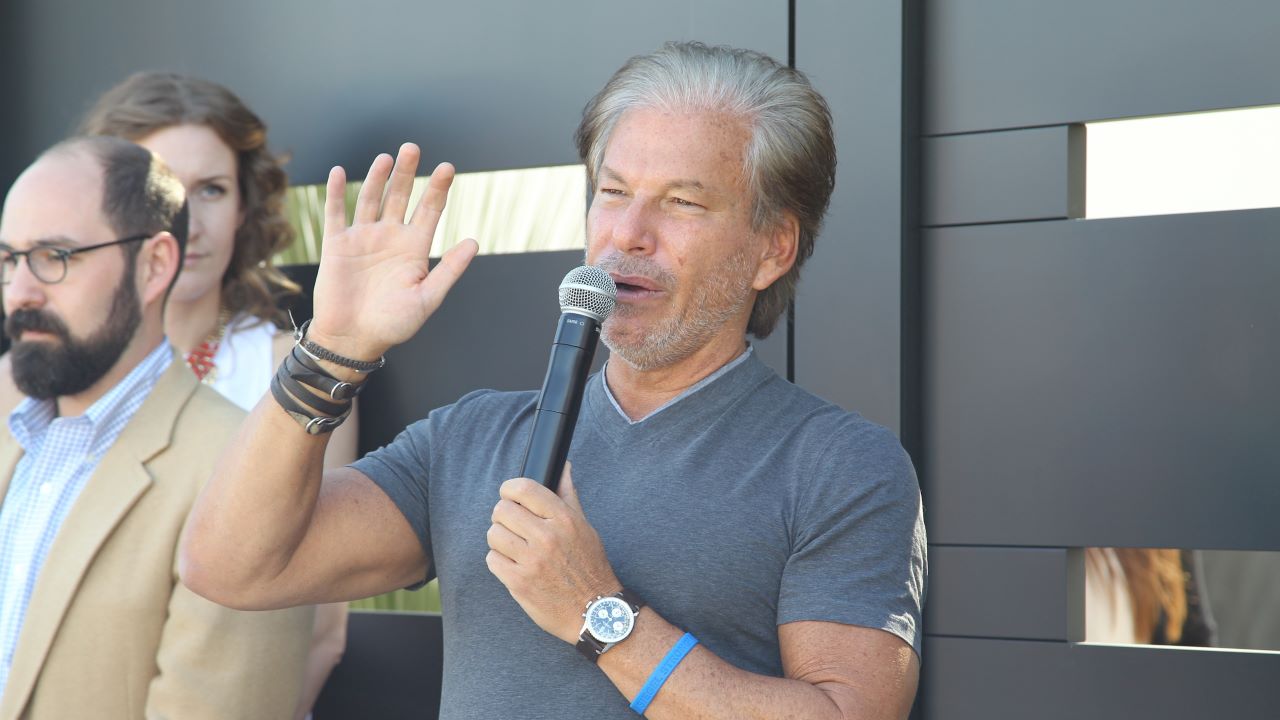

Opinion by: Rob Viglione, co-founder and CEO of Horizen Labs

Can you trust your AI to be unbiased? A recent research paper suggests it’s a little more complicated. Unfortunately, bias isn’t just a bug — it’s a persistent feature without proper cryptographic guardrails.

A September 2024 study from Imperial College London shows how zero-knowledge proofs (ZKPs) can help companies verify that their machine learning (ML) models treat all demographic groups equally while still keeping model details and user data private.

Zero-knowledge proofs are cryptographic methods that enable one party to prove to another that a statement is true without revealing any additional information beyond the statement’s validity. When defining “fairness,” however, we open up a whole new can of worms.
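To make that definition concrete, here is a minimal sketch of a classic textbook example: a Schnorr proof of knowledge, made non-interactive with the Fiat-Shamir heuristic. The prover shows it knows a secret exponent behind a public key without sending the secret. This is not the construction used in the Imperial College study, and ZKML systems rely on far more elaborate proof systems. The group parameters are deliberately tiny (so the secret could be brute-forced), and the function names are illustrative assumptions.

```python
import hashlib
import secrets

# Toy parameters, far too small for real use: G generates the subgroup
# of prime order Q inside the integers modulo the prime P.
P, Q, G = 23, 11, 2


def _challenge(public_key, commitment):
    """Derive the challenge by hashing the public values (Fiat-Shamir)."""
    data = f"{G}|{public_key}|{commitment}".encode()
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % Q


def prove(secret):
    """Return the public key and a proof that the prover knows `secret`."""
    public_key = pow(G, secret, P)
    nonce = secrets.randbelow(Q)          # fresh randomness hides the secret
    commitment = pow(G, nonce, P)
    challenge = _challenge(public_key, commitment)
    response = (nonce + challenge * secret) % Q
    return public_key, (commitment, response)


def verify(public_key, proof):
    """Check the proof using only public values; the secret is never seen."""
    commitment, response = proof
    challenge = _challenge(public_key, commitment)
    return pow(G, response, P) == (commitment * pow(public_key, challenge, P)) % P


secret = 7  # known only to the prover
public_key, proof = prove(secret)
print(verify(public_key, proof))  # True
```

The verifier learns that the statement "the prover knows the exponent behind this public key" is true. A fairness proof has the same shape, with a much richer statement such as "this committed model meets a given fairness threshold."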

Machine learning bias

With machine learning models, bias manifests in dramatically different ways. It can cause a credit scoring service to rate a person differently based on their friends’ and communities’ credit scores, which can be inherently discriminatory. It can also prompt AI image generators to show the Pope and Ancient Greeks as people of different races, like Google’s AI tool Gemini infamously did last year.

Spotting an unfair machine learning (ML) model in the wild is easy. If the model is depriving people of loans or credit because of who their friends are, that’s discrimination. If it’s revising history or treating specific demographics differently to overcorrect in the name of equity, that’s also discrimination. Both scenarios undermine trust in these systems.

Consider a bank using an ML model for loan approvals. A ZKP could prove that the model isn’t biased against any demographic without exposing sensitive customer data or proprietary model details. With ZK and ML, banks could prove they’re not systematically discriminating against a racial group. That proof would be real-time and continuous versus today’s inefficient government audits of private data.

The ideal ML model? One that doesn’t revise history or treat people differently based on their background. AI must adhere to anti-discrimination laws like the American Civil Rights Act of 1964. The problem lies in baking that into AI and making it verifiable.

ZKPs offer the technical pathway to guarantee this adherence.

AI is biased (but it doesn’t have to be)

When dealing with machine learning, we need to be sure that any attestations of fairness keep the underlying ML models and training data confidential. They need to protect intellectual property and users’ privacy while providing enough access for users to know that their model is not discriminatory.

Not an easy task. ZKPs offer a verifiable solution.

ZKML (zero-knowledge machine learning) is how we use zero-knowledge proofs to verify that an ML model is what it says on the box. ZKML combines zero-knowledge cryptography with machine learning to create systems that can verify AI properties without exposing the underlying models or data. We can also take that concept and use ZKPs to identify ML models that treat everyone equally and fairly.

Previously, using ZKPs to prove AI fairness was extremely limited because it could only focus on one phase of the ML pipeline. This made it possible for dishonest model providers to construct data sets that would satisfy the fairness requirements, even if the model failed to do so. The ZKPs would also introduce unrealistic computational demands and long wait times to produce proofs of fairness.

In recent months, ZK frameworks have made it possible to scale ZKPs to determine the end-to-end fairness of models with tens of millions of parameters, and to do so with provable security.

The trillion-dollar question: How do we measure whether an AI is fair?

Let’s break down three of the most common group fairness definitions: demographic parity, equality of opportunity and predictive equality.

Demographic parity means that the probability of a specific prediction is the same across different groups, such as race or sex. Diversity, equity and inclusion departments often use it as a measurement to attempt to reflect the demographics of a population within a company’s workforce. It’s not the ideal fairness metric for ML models because expecting that every group will have the same outcomes is unrealistic.

Equality of opportunity is easy for most people to understand. It gives every group the same chance to have a positive outcome, assuming they are equally qualified. It does not optimize for outcomes; it requires only that every demographic have the same opportunity to get a job or a home loan.

Likewise, predictive equality measures whether an ML model makes erroneous positive predictions at the same rate across various demographics (that is, equal false positive rates), so no one is penalized simply for being part of a group.

In both cases, the ML model is not putting its thumb on the scale for equity reasons; it only ensures that groups are not being discriminated against in any way. This is an eminently sensible fix.
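The three definitions can be made concrete with a small sketch. It assumes the common formalizations: demographic parity compares approval rates, equality of opportunity compares true positive rates (approvals among qualified applicants), and predictive equality compares false positive rates (approvals among unqualified applicants). The record layout, function names and sample data are hypothetical, and nothing here involves a zero-knowledge proof. A ZKML system would aim to prove statements about quantities like these without revealing the records themselves.

```python
def _rate(hits, total):
    """Fraction of hits, or NaN when the group slice is empty."""
    return hits / total if total else float("nan")


def group_rates(records, group):
    """Compute per-group rates from (group, qualified, approved) tuples."""
    rows = [r for r in records if r[0] == group]
    qualified = [r for r in rows if r[1]]
    unqualified = [r for r in rows if not r[1]]
    return {
        "approval_rate": _rate(sum(r[2] for r in rows), len(rows)),
        "true_positive_rate": _rate(sum(r[2] for r in qualified), len(qualified)),
        "false_positive_rate": _rate(sum(r[2] for r in unqualified), len(unqualified)),
    }


def fairness_gaps(records, group_a, group_b):
    """Absolute gap between two groups under each fairness definition."""
    a, b = group_rates(records, group_a), group_rates(records, group_b)
    return {
        "demographic_parity_gap": abs(a["approval_rate"] - b["approval_rate"]),
        "equal_opportunity_gap": abs(a["true_positive_rate"] - b["true_positive_rate"]),
        "predictive_equality_gap": abs(a["false_positive_rate"] - b["false_positive_rate"]),
    }


# Hypothetical loan decisions: (group, truly qualified, approved by model)
records = [
    ("A", True, True), ("A", True, True), ("A", False, True), ("A", False, False),
    ("B", True, True), ("B", True, False), ("B", False, False), ("B", False, False),
]

for name, gap in fairness_gaps(records, "A", "B").items():
    print(f"{name}: {gap:.2f}")
```

A gap of zero means the two groups are treated identically under that definition. In practice an auditor would pick a tolerance, and the three gaps can disagree with one another on the same model.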

Fairness is becoming the standard, one way or another

Over the past year, the US and other governments have issued statements and mandates around AI fairness and protecting the public from ML bias. Now, with a new administration in the US, AI fairness will likely be approached differently, returning the focus to equality of opportunity and away from equity.

As political landscapes shift, so do fairness definitions in AI, moving between equity-focused and opportunity-focused paradigms. We welcome ML models that treat everyone equally without putting thumbs on the scale. Zero-knowledge proofs can serve as an airtight way to verify ML models are doing this without revealing private data.

While ZKPs have faced plenty of scalability challenges over the years, the technology is finally becoming affordable for mainstream use cases. We can use ZKPs to verify training data integrity, protect privacy, and ensure the models we’re using are what they say they are.

As ML models become more interwoven in our daily lives and our future job prospects, college admissions and mortgages depend on them, we could use a little more reassurance that AI treats us fairly. Whether we can all agree on the definition of fairness, however, is another question entirely.

This article is for general information purposes and is not intended to be and should not be taken as legal or investment advice. The views, thoughts, and opinions expressed here are the author’s alone and do not necessarily reflect or represent the views and opinions of Cointelegraph.